Quality standards are not one-size-fits-all. What excellence means is different for an ERP platform, an early-stage MVP, and a social media app.

That’s why we take a personalized approach to each project and start by aligning on the product vision.

Transparency is another aspect of quality control. Full visibility into the development process eliminates the wait-and-see stress for you while giving us a chance to validate work in progress and adjust quickly to feedback. We maintain this level of openness in several ways:

“A PM is the point person for most questions. But when a quick technical detail needs to be clarified, direct communication helps resolve it in minutes. Nobody knows the logic of a specific feature better than the developers building it.”

“When you can see behind the scenes of every sprint, it takes the stress out of the process. You don’t have to wait for a formal report to see how your project’s doing.”

“Insights from demos help us steer development in the right direction and refine features based on real-world implementations rather than just static documentation. For a client, it’s another trust factor. You can see what’s already done and have constant proof that your project is on the right track.”

“We’d love a world where every plan stays exactly the same from start to finish. But in the real world, it’s a rare scenario. Being able to adjust midway is what helps us a lot with ensuring the quality of the final result.”

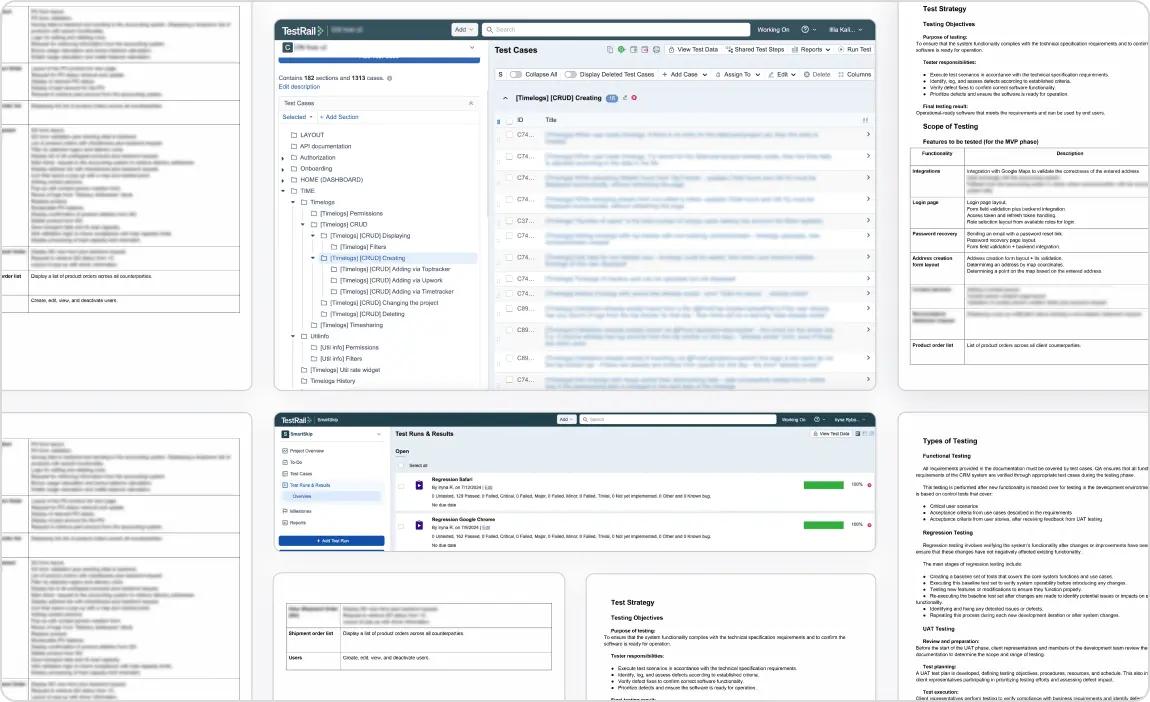

Before any testing starts, we set up a QA system that fits your product.

We test every feature to ensure it meets requirements, with no broken flows or missing logic.

We verify that your app runs fast, stays secure, and remains stable as your traffic scales.

Using your app should feel effortless. We test expected user paths to ensure everything clicks into place.

Users will make mistakes. We make sure your app doesn’t fall apart when they do.

Your app should work well on all major browsers. We test it so that users don’t have to deal with weird bugs.

We test across different platforms (iOS, Android, web) to ensure your app looks great and works perfectly wherever it’s used.

New code shouldn’t trigger old problems. We test past features to ensure they still work after adding new ones.

We check visuals, interactions, and navigation to make sure your app is intuitive and frustration-free.

If there’s an issue, we have a structured way to handle it:

With this approach, issues can be fixed without endless clarifications.

When an issue is fixed, we retest to confirm it’s truly resolved, run regression testing to ensure nothing else has broken as a result of the fix, and evaluate overall product stability and risk.

“High-impact issues go straight to the top of our backlog. After fixing them, we extend test cases and automation to cover the original failure scenario so the same problem doesn’t repeat in future releases.”

When the development scope is done, we provide a test report with structured insights on what was tested, what works, what needs attention, and whether the product is ready for release:

Before launch, every release goes through a final quality gate:

Nothing is approved for release until it meets the agreed quality and stability criteria.

Before public release, we support user acceptance testing. This lets your team and selected users:

We help organize test scenarios, collect feedback, and translate it into actionable improvements so that final adjustments are based on real-world use.

You’ll receive a complete handover package that allows your internal teams (or new vendors) to take over without losing time or context. We provide:

Usually, our QA engineer, tech lead, and PM look at the product scope and requirements together. We consider things like system complexity, integrations, release plans, and where failures would hurt the most. Our goal is to cover everything that matters for your product, and if we see that certain testing activities won’t add value in your case, we skip them. After the plan is ready, we share it with you for alignment before execution.

The first thing we look at is impact. If it’s something that affects core functionality, security, or data, it becomes the top priority and we fix it before release. If it’s a smaller issue, we talk it through with you and decide whether it should block the launch or go into the next iteration. The decision always comes down to user impact and product stability.
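As a rough illustration only, the impact-first rule described above could be sketched like this. The names `Issue`, `Decision`, `CRITICAL_AREAS`, and `triage` are hypothetical and not part of any actual tooling; the area labels simply mirror the three categories named in the text.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    FIX_BEFORE_RELEASE = "fix before release"
    DISCUSS_WITH_CLIENT = "discuss with client"


# The three areas the text names as top priority (labels are illustrative).
CRITICAL_AREAS = {"core functionality", "security", "data"}


@dataclass
class Issue:
    title: str
    affected_areas: set[str]


def triage(issue: Issue) -> Decision:
    """Pick a handling path based on what the issue affects."""
    # Anything touching a critical area is fixed before release.
    if issue.affected_areas & CRITICAL_AREAS:
        return Decision.FIX_BEFORE_RELEASE
    # Smaller issues are talked through with the client, who helps decide
    # whether they block the launch or move to the next iteration.
    return Decision.DISCUSS_WITH_CLIENT
```

In this sketch, the second branch deliberately returns a discussion step rather than an automatic verdict, since the text leaves that call to a joint decision based on user impact and product stability.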

Not really. In many cases, good testing actually saves money because problems are found earlier, when they’re still cheap to fix. What matters more is how testing is organized. Choosing the right coverage and tools, rather than simply adding more testing hours, is key.

We don’t make decisions in isolation. We bring clear documentation to the discussion so you can see the impact. Our test reports show what functionality has been validated and whether it meets requirements. If QA activities need to be adjusted, we transparently explain the potential risks and what quality gaps could appear.

Then, we review the available options together and agree on the approach that makes the most sense for your situation, balancing stability, scope, and deadline.

We choose the approach based on project scope and complexity. For smaller projects, manual testing is usually the best option, while larger or fast-growing products benefit from automation for core functionality and regression coverage.

We always discuss the testing approach at the start and show how different levels of automation affect the overall cost. If needed, we can start with manual testing and introduce automation later, when it makes sense.